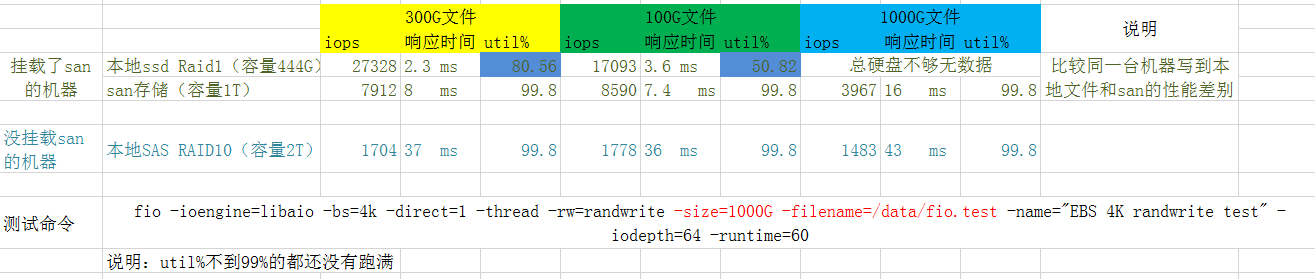

| [essd_pl3]# fio -ioengine=libaio -bs=4k -direct=1 -buffered=1 -thread -rw=randwrite -rwmixread=70 -size=160G -filename=./fio.test -name="EBS 4K randwrite test" -iodepth=64 -runtime=60

EBS 4K randwrite test: (g=0): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64

fio-3.7

Starting 1 thread

Jobs: 1 (f=1): [w(1)][100.0%][r=0KiB/s,w=566MiB/s][r=0,w=145k IOPS][eta 00m:00s]

EBS 4K randwrite test: (groupid=0, jobs=1): err= 0: pid=2416234: Thu Apr 7 17:03:07 2022

write: IOPS=96.2k, BW=376MiB/s (394MB/s)(22.0GiB/60000msec)

slat (usec): min=2, max=530984, avg= 8.27, stdev=1104.96

clat (usec): min=2, max=944103, avg=599.25, stdev=9230.93

lat (usec): min=7, max=944111, avg=607.60, stdev=9308.81

clat percentiles (usec):

| 1.00th=[ 392], 5.00th=[ 400], 10.00th=[ 404], 20.00th=[ 408],

| 30.00th=[ 412], 40.00th=[ 416], 50.00th=[ 420], 60.00th=[ 424],

| 70.00th=[ 433], 80.00th=[ 441], 90.00th=[ 457], 95.00th=[ 482],

| 99.00th=[ 627], 99.50th=[ 766], 99.90th=[ 1795], 99.95th=[ 4228],

| 99.99th=[488637]

bw ( KiB/s): min= 168, max=609232, per=100.00%, avg=422254.17, stdev=257181.75, samples=108

iops : min= 42, max=152308, avg=105563.63, stdev=64295.48, samples=108

lat (usec) : 4=0.01%, 10=0.01%, 50=0.01%, 100=0.01%, 250=0.01%

lat (usec) : 500=96.35%, 750=3.11%, 1000=0.26%

lat (msec) : 2=0.19%, 4=0.03%, 10=0.02%, 250=0.01%, 500=0.03%

lat (msec) : 750=0.01%, 1000=0.01%

cpu : usr=13.56%, sys=60.78%, ctx=1455, majf=0, minf=9743

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%

issued rwts: total=0,5771972,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=64

Run status group 0 (all jobs):

WRITE: bw=376MiB/s (394MB/s), 376MiB/s-376MiB/s (394MB/s-394MB/s), io=22.0GiB (23.6GB), run=60000-60000msec

Disk stats (read/write):

vdb: ios=0/1463799, merge=0/7373, ticks=0/2011879, in_queue=2011879, util=27.85%

[essd_pl3]# fio -ioengine=libaio -bs=4k -direct=1 -buffered=1 -thread -rw=randread -rwmixread=70 -size=160G -filename=./fio.test -name="EBS 4K randwrite test" -iodepth=64 -runtime=60

EBS 4K randwrite test: (g=0): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64

fio-3.7

Starting 1 thread

Jobs: 1 (f=1): [r(1)][100.0%][r=15.9MiB/s,w=0KiB/s][r=4058,w=0 IOPS][eta 00m:00s]

EBS 4K randwrite test: (groupid=0, jobs=1): err= 0: pid=2441598: Thu Apr 7 17:05:10 2022

read: IOPS=3647, BW=14.2MiB/s (14.9MB/s)(855MiB/60001msec)

slat (usec): min=183, max=10119, avg=239.01, stdev=110.20

clat (usec): min=2, max=54577, avg=15170.17, stdev=1324.10

lat (usec): min=237, max=55110, avg=15409.34, stdev=1338.09

clat percentiles (usec):

| 1.00th=[13960], 5.00th=[14091], 10.00th=[14222], 20.00th=[14484],

| 30.00th=[14615], 40.00th=[14746], 50.00th=[14877], 60.00th=[15139],

| 70.00th=[15270], 80.00th=[15533], 90.00th=[16057], 95.00th=[16712],

| 99.00th=[20317], 99.50th=[22152], 99.90th=[26346], 99.95th=[30802],

| 99.99th=[52691]

bw ( KiB/s): min= 6000, max=17272, per=100.00%, avg=16511.28, stdev=1140.64, samples=105

iops : min= 1500, max= 4318, avg=4127.81, stdev=285.16, samples=105

lat (usec) : 4=0.01%, 250=0.01%, 500=0.01%, 750=0.01%, 1000=0.01%

lat (msec) : 2=0.01%, 4=0.01%, 10=0.01%, 20=98.91%, 50=1.05%

lat (msec) : 100=0.02%

cpu : usr=0.18%, sys=17.18%, ctx=219041, majf=0, minf=4215

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%

issued rwts: total=218835,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=64

Run status group 0 (all jobs):

READ: bw=14.2MiB/s (14.9MB/s), 14.2MiB/s-14.2MiB/s (14.9MB/s-14.9MB/s), io=855MiB (896MB), run=60001-60001msec

Disk stats (read/write):

vdb: ios=218343/7992, merge=0/8876, ticks=50566/3749, in_queue=54315, util=88.08%

[essd_pl3]# fio -ioengine=libaio -bs=4k -direct=1 -buffered=1 -thread -rw=randrw -rwmixread=70 -size=160G -filename=./fio.test -name="EBS 4K randwrite test" -iodepth=64 -runtime=60

EBS 4K randwrite test: (g=0): rw=randrw, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=64

fio-3.7

Starting 1 thread

Jobs: 1 (f=1): [m(1)][100.0%][r=15.7MiB/s,w=7031KiB/s][r=4007,w=1757 IOPS][eta 00m:00s]

EBS 4K randwrite test: (groupid=0, jobs=1): err= 0: pid=2641414: Thu Apr 7 17:21:10 2022

read: IOPS=3962, BW=15.5MiB/s (16.2MB/s)(929MiB/60001msec)

slat (usec): min=182, max=7194, avg=243.23, stdev=116.87

clat (usec): min=2, max=235715, avg=11020.01, stdev=3366.61

lat (usec): min=253, max=235991, avg=11263.40, stdev=3375.49

clat percentiles (msec):

| 1.00th=[ 9], 5.00th=[ 10], 10.00th=[ 10], 20.00th=[ 11],

| 30.00th=[ 11], 40.00th=[ 11], 50.00th=[ 11], 60.00th=[ 12],

| 70.00th=[ 12], 80.00th=[ 12], 90.00th=[ 13], 95.00th=[ 14],

| 99.00th=[ 16], 99.50th=[ 18], 99.90th=[ 31], 99.95th=[ 36],

| 99.99th=[ 234]

bw ( KiB/s): min=10808, max=17016, per=100.00%, avg=15977.89, stdev=895.35, samples=118

iops : min= 2702, max= 4254, avg=3994.47, stdev=223.85, samples=118

write: IOPS=1701, BW=6808KiB/s (6971kB/s)(399MiB/60001msec)

slat (usec): min=3, max=221631, avg=10.16, stdev=693.59

clat (usec): min=486, max=235772, avg=11029.42, stdev=3590.93

lat (usec): min=493, max=235780, avg=11039.67, stdev=3659.04

clat percentiles (msec):

| 1.00th=[ 9], 5.00th=[ 10], 10.00th=[ 10], 20.00th=[ 11],

| 30.00th=[ 11], 40.00th=[ 11], 50.00th=[ 11], 60.00th=[ 12],

| 70.00th=[ 12], 80.00th=[ 12], 90.00th=[ 13], 95.00th=[ 14],

| 99.00th=[ 16], 99.50th=[ 18], 99.90th=[ 31], 99.95th=[ 37],

| 99.99th=[ 234]

bw ( KiB/s): min= 4480, max= 7728, per=100.00%, avg=6862.60, stdev=475.79, samples=118

iops : min= 1120, max= 1932, avg=1715.64, stdev=118.97, samples=118

lat (usec) : 4=0.01%, 500=0.01%, 750=0.01%

lat (msec) : 2=0.01%, 4=0.01%, 10=20.77%, 20=78.89%, 50=0.31%

lat (msec) : 100=0.01%, 250=0.02%

cpu : usr=0.65%, sys=7.20%, ctx=239089, majf=0, minf=8292

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%

issued rwts: total=237743,102115,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=64

Run status group 0 (all jobs):

READ: bw=15.5MiB/s (16.2MB/s), 15.5MiB/s-15.5MiB/s (16.2MB/s-16.2MB/s), io=929MiB (974MB), run=60001-60001msec

WRITE: bw=6808KiB/s (6971kB/s), 6808KiB/s-6808KiB/s (6971kB/s-6971kB/s), io=399MiB (418MB), run=60001-60001msec

Disk stats (read/write):

vdb: ios=237216/118960, merge=0/8118, ticks=55191/148225, in_queue=203416, util=99.35%

[essd_pl3]# fio -bs=4k -direct=1 -buffered=0 -thread -rw=randwrite -rwmixread=70 -size=16G -filename=./fio.test -name="EBS 4K randwrite test" -iodepth=64 -runtime=30

EBS 4K randwrite test: (g=0): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=psync, iodepth=64

fio-3.7

Starting 1 thread

Jobs: 1 (f=1): [w(1)][100.0%][r=0KiB/s,w=28.3MiB/s][r=0,w=7249 IOPS][eta 00m:00s]

EBS 4K randwrite test: (groupid=0, jobs=1): err= 0: pid=2470117: Fri Apr 8 15:35:20 2022

write: IOPS=7222, BW=28.2MiB/s (29.6MB/s)(846MiB/30001msec)

clat (usec): min=115, max=7155, avg=137.29, stdev=68.48

lat (usec): min=115, max=7156, avg=137.36, stdev=68.49

clat percentiles (usec):

| 1.00th=[ 121], 5.00th=[ 123], 10.00th=[ 125], 20.00th=[ 126],

| 30.00th=[ 127], 40.00th=[ 129], 50.00th=[ 130], 60.00th=[ 133],

| 70.00th=[ 135], 80.00th=[ 139], 90.00th=[ 149], 95.00th=[ 163],

| 99.00th=[ 255], 99.50th=[ 347], 99.90th=[ 668], 99.95th=[ 947],

| 99.99th=[ 3589]

bw ( KiB/s): min=23592, max=30104, per=99.95%, avg=28873.29, stdev=1084.49, samples=59

iops : min= 5898, max= 7526, avg=7218.32, stdev=271.12, samples=59

lat (usec) : 250=98.95%, 500=0.81%, 750=0.17%, 1000=0.03%

lat (msec) : 2=0.02%, 4=0.02%, 10=0.01%

cpu : usr=0.72%, sys=5.08%, ctx=216767, majf=0, minf=148

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued rwts: total=0,216677,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=64

Run status group 0 (all jobs):

WRITE: bw=28.2MiB/s (29.6MB/s), 28.2MiB/s-28.2MiB/s (29.6MB/s-29.6MB/s), io=846MiB (888MB), run=30001-30001msec

Disk stats (read/write):

vdb: ios=0/219122, merge=0/3907, ticks=0/29812, in_queue=29812, util=99.52%

[root@hygon8 14:44 /polarx/lvm]

#fio -bs=4k -direct=1 -buffered=0 -thread -rw=randwrite -rwmixread=70 -size=16G -filename=./fio.test -name="EBS 4K randwrite test" -iodepth=64 -runtime=30

EBS 4K randwrite test: (g=0): rw=randwrite, bs=4K-4K/4K-4K/4K-4K, ioengine=sync, iodepth=64

fio-2.2.8

Starting 1 thread

Jobs: 1 (f=1): [w(1)] [100.0% done] [0KB/157.2MB/0KB /s] [0/40.3K/0 iops] [eta 00m:00s]

EBS 4K randwrite test: (groupid=0, jobs=1): err= 0: pid=3486352: Fri Apr 8 14:45:43 2022

write: io=4710.4MB, bw=160775KB/s, iops=40193, runt= 30001msec

clat (usec): min=18, max=4164, avg=22.05, stdev= 7.33

lat (usec): min=19, max=4165, avg=22.59, stdev= 7.36

clat percentiles (usec):

| 1.00th=[ 20], 5.00th=[ 20], 10.00th=[ 21], 20.00th=[ 21],

| 30.00th=[ 21], 40.00th=[ 21], 50.00th=[ 21], 60.00th=[ 22],

| 70.00th=[ 22], 80.00th=[ 22], 90.00th=[ 23], 95.00th=[ 25],

| 99.00th=[ 36], 99.50th=[ 40], 99.90th=[ 62], 99.95th=[ 99],

| 99.99th=[ 157]

bw (KB /s): min=147568, max=165400, per=100.00%, avg=160803.12, stdev=2704.22

lat (usec) : 20=0.08%, 50=99.70%, 100=0.17%, 250=0.04%, 500=0.01%

lat (usec) : 750=0.01%, 1000=0.01%

lat (msec) : 2=0.01%, 10=0.01%

cpu : usr=6.95%, sys=31.18%, ctx=1205994, majf=0, minf=1573

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued : total=r=0/w=1205849/d=0, short=r=0/w=0/d=0, drop=r=0/w=0/d=0

latency : target=0, window=0, percentile=100.00%, depth=64

Run status group 0 (all jobs):

WRITE: io=4710.4MB, aggrb=160774KB/s, minb=160774KB/s, maxb=160774KB/s, mint=30001msec, maxt=30001msec

Disk stats (read/write):

dm-2: ios=0/1204503, merge=0/0, ticks=0/15340, in_queue=15340, util=50.78%, aggrios=0/603282, aggrmerge=0/463, aggrticks=0/8822, aggrin_queue=0, aggrutil=28.66%

nvme0n1: ios=0/683021, merge=0/474, ticks=0/9992, in_queue=0, util=28.66%

nvme1n1: ios=0/523543, merge=0/452, ticks=0/7652, in_queue=0, util=21.67%

[root@x86.170 /polarx/lvm]

#/usr/sbin/nvme list

Node SN Model Namespace Usage Format FW Rev

---------------- -------------------- ---------------------------------------- --------- -------------------------- ---------------- --------

/dev/nvme0n1 BTLJ932205P44P0DGN INTEL SSDPE2KX040T8 1 3.84 TB / 3.84 TB 512 B + 0 B VDV10131

/dev/nvme1n1 BTLJ932207H04P0DGN INTEL SSDPE2KX040T8 1 3.84 TB / 3.84 TB 512 B + 0 B VDV10131

/dev/nvme2n1 BTLJ932205AS4P0DGN INTEL SSDPE2KX040T8 1 3.84 TB / 3.84 TB 512 B + 0 B VDV10131

[root@x86.170 /polarx/lvm]

#fio -bs=4k -direct=1 -buffered=0 -thread -rw=randwrite -rwmixread=70 -size=16G -filename=./fio.test -name="EBS 4K randwrite test" -iodepth=64 -runtime=30

EBS 4K randwrite test: (g=0): rw=randwrite, bs=4K-4K/4K-4K/4K-4K, ioengine=sync, iodepth=64

fio-2.2.8

Starting 1 thread

Jobs: 1 (f=1): [w(1)] [100.0% done] [0KB/240.2MB/0KB /s] [0/61.5K/0 iops] [eta 00m:00s]

EBS 4K randwrite test: (groupid=0, jobs=1): err= 0: pid=11516: Fri Apr 8 15:44:36 2022

write: io=7143.3MB, bw=243813KB/s, iops=60953, runt= 30001msec

clat (usec): min=10, max=818, avg=14.96, stdev= 4.14

lat (usec): min=10, max=818, avg=15.14, stdev= 4.15

clat percentiles (usec):

| 1.00th=[ 11], 5.00th=[ 12], 10.00th=[ 12], 20.00th=[ 14],

| 30.00th=[ 15], 40.00th=[ 15], 50.00th=[ 15], 60.00th=[ 15],

| 70.00th=[ 15], 80.00th=[ 16], 90.00th=[ 16], 95.00th=[ 16],

| 99.00th=[ 20], 99.50th=[ 32], 99.90th=[ 78], 99.95th=[ 84],

| 99.99th=[ 105]

bw (KB /s): min=236768, max=246424, per=99.99%, avg=243794.17, stdev=1736.82

lat (usec) : 20=98.96%, 50=0.73%, 100=0.29%, 250=0.01%, 500=0.01%

lat (usec) : 750=0.01%, 1000=0.01%

cpu : usr=10.65%, sys=42.66%, ctx=1828699, majf=0, minf=7

IO depths : 1=100.0%, 2=0.0%, 4=0.0%, 8=0.0%, 16=0.0%, 32=0.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

issued : total=r=0/w=1828662/d=0, short=r=0/w=0/d=0, drop=r=0/w=0/d=0

latency : target=0, window=0, percentile=100.00%, depth=64

Run status group 0 (all jobs):

WRITE: io=7143.3MB, aggrb=243813KB/s, minb=243813KB/s, maxb=243813KB/s, mint=30001msec, maxt=30001msec

Disk stats (read/write):

dm-0: ios=0/1823575, merge=0/0, ticks=0/13666, in_queue=13667, util=45.56%, aggrios=0/609558, aggrmerge=0/2, aggrticks=0/4280, aggrin_queue=4198, aggrutil=14.47%

nvme0n1: ios=0/609144, merge=0/6, ticks=0/4438, in_queue=4353, util=14.47%

nvme1n1: ios=0/609470, merge=0/0, ticks=0/4186, in_queue=4109, util=13.65%

nvme2n1: ios=0/610060, merge=0/0, ticks=0/4216, in_queue=4134, util=13.74%

|
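As a quick sanity check on the logs above, the reported numbers can be cross-checked with two relations: bandwidth is IOPS times the 4 KiB block size, and for the runs where fio reports `IO depths : 1=100.0%` (the `psync`/`sync` engines, where `-iodepth=64` has no effect) a single thread cannot exceed roughly one I/O per average latency. The sketch below is only an illustration in Python; the run labels and helper names are made up for this example, and the figures are copied from the output above.

```python
# Figures copied from the fio logs above: (label, reported IOPS, average "lat" in usec).
# The labels are informal names for this sketch, not something fio prints.
SYNC_RUNS = [
    ("essd_pl3 (vdb, psync)", 7222, 137.36),
    ("hygon8 (dm-2, sync)", 40193, 22.59),
    ("x86.170 (dm-0, sync)", 60953, 15.14),
]

BLOCK_SIZE = 4096  # bytes, from -bs=4k


def bandwidth_mib_s(iops, block_size=BLOCK_SIZE):
    """Bandwidth in MiB/s implied by an IOPS figure at a fixed block size."""
    return iops * block_size / 2**20


def qd1_iops_ceiling(avg_lat_usec):
    """Upper bound on IOPS for one thread issuing one I/O at a time."""
    return 1_000_000 / avg_lat_usec


if __name__ == "__main__":
    for label, iops, lat in SYNC_RUNS:
        ceiling = qd1_iops_ceiling(lat)
        print(
            f"{label}: {bandwidth_mib_s(iops):.1f} MiB/s, "
            f"latency-bound ceiling {ceiling:.0f} IOPS, "
            f"reported {iops} ({iops / ceiling:.0%} of ceiling)"
        )
```

Under these assumptions, all three synchronous runs land within about 10% of the latency-bound ceiling, which suggests that the gap between the ESSD and the local NVMe volumes in those runs comes from per-I/O latency rather than from the devices' throughput limits.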

![[China thumbs-up]](/images/951413iMgBlog/2018new_zhongguozan_org.png), 10,000 times better than me
